Lockbox

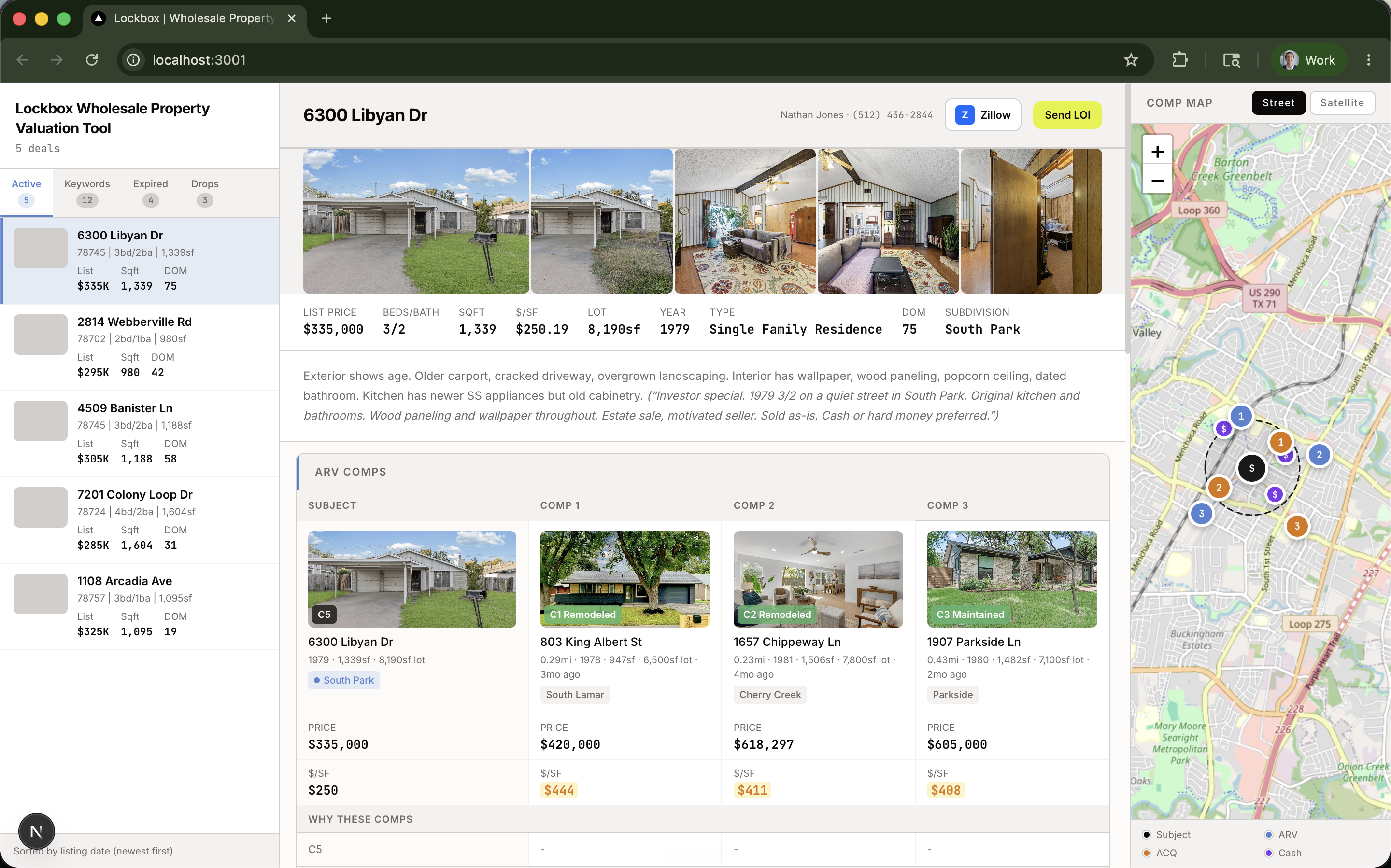

A wholesale real estate platform for running comps and making offers. It pulled every active MLS listing in a market, used AI to score each listing's photos for renovation potential, and ran comparables to estimate both current value and after-repair value.

Outside of work, I enjoy real estate investing. Unfortunately for me, Texas is a non-disclosure state, meaning sale prices aren't part of the public record the way they are in most of the country.

Realtors have exclusive access to this data through the MLS, and they use that access to demonstrate their value.

Luckily for me, a family member runs a wholesale real estate brokerage in Texas. He's a professional who does 50+ transactions a year, and his brokerage license let me create an API key and access the data myself.

Wholesalers today comb the MLS, review recent listings, and manually check photos, recent sales, square footage, and a long list of nuanced factors that go into a real estate evaluation. The best wholesalers watch the MLS like a hawk. I built a tool that automates that process.

The first step was connecting to the API. I started with a couple of zip codes to keep the scope narrow. I quickly hit rate limits and had to figure out how to work around them. From there I kept testing what the API actually exposed: which fields, how much depth, and what was reliable.
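The post doesn't name the MLS API or its limits. Many MLS feeds follow the RESO Web API standard, which is built on OData, and OData v4 servers page results with an `@odata.nextLink` URL. Here is a minimal sketch assuming that shape: retry on HTTP 429 with exponential backoff (or the server's `Retry-After`), then follow next links until they run out. `fetch_page` (your actual HTTP call) and `RateLimited` are hypothetical names, and the retry defaults are placeholders, not the project's settings.

```python
import time
from typing import Callable, Iterator, Optional


class RateLimited(Exception):
    """Hypothetical: raised by fetch_page when the server answers HTTP 429."""

    def __init__(self, retry_after: Optional[float] = None):
        super().__init__("rate limited")
        self.retry_after = retry_after  # seconds from a Retry-After header, if any


def with_backoff(call: Callable[[], dict], max_retries: int = 5,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> dict:
    """Run `call`, retrying on RateLimited.

    Honors the server's Retry-After when present; otherwise waits
    base_delay * 2**attempt (1s, 2s, 4s, ...). Re-raises after max_retries.
    """
    for attempt in range(max_retries + 1):
        try:
            return call()
        except RateLimited as exc:
            if attempt == max_retries:
                raise
            if exc.retry_after is not None:
                sleep(exc.retry_after)
            else:
                sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")


def iter_listings(fetch_page: Callable[[str], dict], first_url: str,
                  **backoff_kwargs) -> Iterator[dict]:
    """Yield every record, following OData-style @odata.nextLink pages."""
    url: Optional[str] = first_url
    while url:
        current = url
        page = with_backoff(lambda: fetch_page(current), **backoff_kwargs)
        yield from page.get("value", [])   # OData puts records under "value"
        url = page.get("@odata.nextLink")  # absent on the last page
```

Injecting `sleep` keeps the retry logic testable without real waits; in production you would leave the default `time.sleep`.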

Once the data was flowing, the question was which model should process it. Wholesalers start every evaluation with the photos, so a vision model was the right call. I tested Gemini 2.5 Flash head-to-head against Qwen 3.5 Flash and a couple of others, and Gemini won on both cost and accuracy.
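The post doesn't describe how accuracy was measured. One simple way to run a head-to-head like this, sketched under that assumption, is to hand-label a sample of listings with condition ratings, run each model over the same sample, and compare exact-match accuracy, within-one-grade accuracy, and spend per listing. `ModelRun`, `score_run`, and `pick_winner` are hypothetical names, and the numbers below are toy inputs, not results from the project.

```python
from dataclasses import dataclass


@dataclass
class ModelRun:
    name: str
    predictions: list[int]  # predicted condition per listing, 1..6 for C1..C6
    cost_usd: float         # total API spend for this run


def score_run(run: ModelRun, labels: list[int]) -> dict:
    """Compare one model's ratings against hand labels for the same listings."""
    if not labels or len(run.predictions) != len(labels):
        raise ValueError("need one prediction per labeled listing")
    n = len(labels)
    pairs = list(zip(run.predictions, labels))
    return {
        "model": run.name,
        "exact": sum(p == y for p, y in pairs) / n,
        "within_one": sum(abs(p - y) <= 1 for p, y in pairs) / n,
        "cost_per_listing": run.cost_usd / n,
    }


def pick_winner(results: list[dict]) -> str:
    """Highest exact accuracy wins; ties go to the cheaper model."""
    best = max(results, key=lambda r: (r["exact"], -r["cost_per_listing"]))
    return best["model"]


# Toy example: four hand-labeled listings, two hypothetical models.
labels = [3, 4, 5, 2]
results = [
    score_run(ModelRun("model-a", [3, 4, 4, 2], cost_usd=0.04), labels),
    score_run(ModelRun("model-b", [3, 5, 5, 2], cost_usd=0.12), labels),
]
print(pick_winner(results))  # model-a: same exact accuracy, lower cost
```

Tracking within-one accuracy alongside exact matches helps separate a model that is slightly off (C3 vs. C4) from one that is badly wrong.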

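Photos get scored on the C1-to-C6 condition scale from the Uniform Appraisal Dataset, the same language a professional real estate appraiser uses, where C1 is newly built and C6 has substantial damage. Anything rated worse than C3 (C4 through C6) flags potential flip or investment value. Those properties go into the comp pipeline.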
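A vision model answers in free text, so something has to turn its reply into a rating before the cutoff applies. A minimal sketch, assuming the prompt asks the model to include a token like `C4` in its answer; `parse_condition` and `needs_comps` are hypothetical helpers, and the default cutoff treats C4 through C6 as flip candidates.

```python
import re
from typing import Optional

# Matches a standalone condition token such as "C4" (C1 = best, C6 = worst).
_RATING = re.compile(r"\bC([1-6])\b")


def parse_condition(model_output: str) -> Optional[int]:
    """Return the first C1..C6 rating in the model's reply, or None."""
    match = _RATING.search(model_output.upper())
    return int(match.group(1)) if match else None


def needs_comps(rating: Optional[int], cutoff: int = 3) -> bool:
    """Send worse-than-cutoff properties (C4-C6 by default) to the comp pipeline."""
    return rating is not None and rating > cutoff


reply = "Overall condition: C4. Original kitchen, worn flooring."
rating = parse_condition(reply)
print(rating, needs_comps(rating))  # 4 True
```

Returning `None` rather than guessing when no rating is found keeps unparseable replies out of the comp pipeline instead of silently misclassifying them.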

The comp pipeline measures two things. First, current value: what comparable properties have sold for within a radius, matched on house size, lot size, and condition. Second, after-repair value: what fully renovated homes in C1 or C2 condition have sold for in the same radius. The spread between the offer price and the after-repair value is where the deal lives. From there you can work backward to whether something's actually a deal, and what offer you'd need to hit to make it one.
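The post doesn't publish the comp formula, and every wholesaler tunes their own, so treat this as a minimal sketch of the two-number idea rather than the project's algorithm. Assumptions: comps are filtered by haversine distance and by house and lot size within ±20%; current value uses sales within one condition grade of the subject; after-repair value uses C1 and C2 sales; both are the median price per square foot scaled to the subject's size. The offer helper uses the common "70% rule" (maximum offer = 70% of after-repair value minus repairs) as a placeholder margin. All names and defaults are hypothetical.

```python
import math
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Property:
    lat: float
    lon: float
    sqft: float
    lot_sqft: float
    condition: int  # 1..6 for C1..C6


@dataclass
class Sale(Property):
    price: float


def miles_between(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance via the haversine formula (Earth radius ~3958.8 mi)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * 3958.8 * math.asin(math.sqrt(a))


def is_similar(subject: Property, sale: Sale, radius_mi: float, tol: float) -> bool:
    """Within the radius, with house and lot size within +/- tol of the subject."""
    return (miles_between(subject.lat, subject.lon, sale.lat, sale.lon) <= radius_mi
            and abs(sale.sqft - subject.sqft) <= tol * subject.sqft
            and abs(sale.lot_sqft - subject.lot_sqft) <= tol * subject.lot_sqft)


def value_from(subject: Property, comps: list[Sale]) -> Optional[float]:
    """Median price per sqft of the comps, scaled to the subject's size."""
    if not comps:
        return None
    return median(c.price / c.sqft for c in comps) * subject.sqft


def run_comps(subject: Property, sales: list[Sale], radius_mi: float = 1.0,
              tol: float = 0.20) -> tuple[Optional[float], Optional[float]]:
    """Return (current value, after-repair value) for the subject."""
    pool = [s for s in sales if is_similar(subject, s, radius_mi, tol)]
    as_is = [s for s in pool if abs(s.condition - subject.condition) <= 1]
    renovated = [s for s in pool if s.condition <= 2]
    return value_from(subject, as_is), value_from(subject, renovated)


def max_offer(arv: float, repairs: float, margin: float = 0.30) -> float:
    """70% rule by default: ARV * (1 - margin) - estimated repairs."""
    return arv * (1 - margin) - repairs
```

The radius, size tolerance, condition bands, and margin are all exposed as parameters, which leaves room for each wholesaler's own approach; median price per square foot is used here only because it resists a single outlier sale.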

A lot of the heavy lifting comes from open data. The map is Leaflet on top of OpenStreetMap tiles. The busy-road detector is pure OSM: a one-time pull of every primary, secondary, and tertiary highway in the South Austin bounding box via the Overpass API, cached to a static CSV that the server reads at runtime. Wholesalers told me a nearby busy road can throw a comp off by $50–100K, and the comp engine had no way to see it. Now every property gets a distance-to-nearest-arterial check, and an "on busy road" or "near busy road" flag renders right next to the address.
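Here is a minimal sketch of both halves of the busy-road check, assuming the one-time pull requests ways with `out geom;` so each way comes back with its node coordinates. The query string would be sent to a public Overpass endpoint such as `https://overpass-api.de/api/interpreter`; each consecutive pair of nodes becomes one `(lat1, lon1, lat2, lon2)` segment, a natural row shape for the cached CSV. The distance math uses a local equirectangular projection, which is accurate enough at neighborhood scale. The function names, the 25 m "on" and 150 m "near" thresholds, and any bounding box you pass in are hypothetical, not the project's values.

```python
import math
from typing import Optional

Segment = tuple[float, float, float, float]  # (lat1, lon1, lat2, lon2)
EARTH_RADIUS_M = 6_371_000.0


def overpass_query(south: float, west: float, north: float, east: float) -> str:
    """Overpass QL for primary/secondary/tertiary roads in a bounding box."""
    return (
        "[out:json][timeout:120];"
        f'way["highway"~"^(primary|secondary|tertiary)$"]({south},{west},{north},{east});'
        "out geom;"
    )


def segments_from_overpass(data: dict) -> list[Segment]:
    """Split each returned way into straight segments between consecutive nodes."""
    segments: list[Segment] = []
    for element in data.get("elements", []):
        points = element.get("geometry") or []
        for a, b in zip(points, points[1:]):
            segments.append((a["lat"], a["lon"], b["lat"], b["lon"]))
    return segments


def _xy(lat: float, lon: float, lat0: float) -> tuple[float, float]:
    """Equirectangular projection to meters around reference latitude lat0."""
    x = math.radians(lon) * EARTH_RADIUS_M * math.cos(math.radians(lat0))
    y = math.radians(lat) * EARTH_RADIUS_M
    return x, y


def distance_to_segment_m(lat: float, lon: float, seg: Segment) -> float:
    """Shortest distance in meters from a point to a road segment."""
    px, py = _xy(lat, lon, lat)
    ax, ay = _xy(seg[0], seg[1], lat)
    bx, by = _xy(seg[2], seg[3], lat)
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    # Project the point onto the segment, clamping to its endpoints.
    t = 0.0 if length_sq == 0 else max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def busy_road_flag(lat: float, lon: float, segments: list[Segment],
                   on_m: float = 25.0, near_m: float = 150.0) -> Optional[str]:
    """Label a property by its distance to the nearest arterial segment."""
    if not segments:
        return None
    nearest = min(distance_to_segment_m(lat, lon, s) for s in segments)
    if nearest <= on_m:
        return "on busy road"
    if nearest <= near_m:
        return "near busy road"
    return None
```

A linear scan over every segment is fine for one metro area; a larger footprint would call for a spatial index.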

The hardest part was building a comp algorithm wholesalers would trust. The comp number is sensitive: every wholesaler considers themselves an expert at it, and every one has their own approach and intuition, especially since they pride themselves on local, neighborhood-level knowledge.

The other hard part was design and visual hierarchy. I mirrored Ramp's design language, pulling their page source through dev tools and rebuilding the components in Tailwind. Even with a good starting point, it took a long time to figure out what to surface first: what's a hero number, what's a sidebar number, what's a tooltip. Every screen had to follow the order in which a wholesaler actually checks things, so they could get to a number they trust, fast. If they don't trust the number, they don't use the tool.

A few lessons I learned:

- Navigating APIs at scale is a different problem from calling one a few times. You have to handle rate limits, pagination quirks, and response drift between endpoints.

- If you don't know what to build, go talk to your users. Then talk to them again. Build it, get feedback. Build it, get feedback. It's easy to spin your wheels in cycles. Pick a real end user and lean on their industry wisdom.

- You eventually get to a point where building is the easy part. Selling is the hard part.

How it's built

Notes

Texas being a non-disclosure state is the whole reason this project existed. In most states, sale prices are public record. Here, they're not. That gap is the thing realtors get paid to bridge, and it's what made the data feel worth chasing.

I still think there's a small but large-enough market opportunity here to build a fun lifestyle business. It just didn't feel like the opportunity for me.